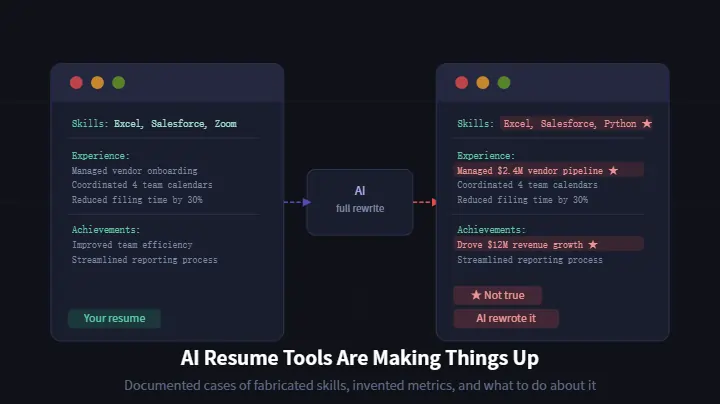

AI Resume Tools Are Making Things Up — And Users Are Paying the Price

AI resume tools are confidently adding skills you don't have and metrics you never hit. Here's the documented proof, why it happens, and how to protect yourself before a recruiter catches it.

You uploaded your resume. You pasted the job description. The AI did its thing, and thirty seconds later you had a polished, tailored document ready to send.

There's just one problem. Some of those skills? You don't have them. Some of those metrics? You never achieved them. The AI didn't know that — and it didn't care.

This isn't a hypothetical warning about what AI could do. It's a documented pattern happening right now, and real job seekers are finding out the hard way.

As someone who builds AI career tools and spends time in job-seeker communities watching these problems unfold, I want to be upfront about something: we have a stake in this conversation. We built the ATS Resume Checker you'll see mentioned at the end of this article. But the fabrication problem I'm describing is real, it can affect any AI resume tool that rewrites your content, and job seekers deserve a clear-eyed look at what's actually happening before they hit send.

The Python Problem

Last year, a user on r/ResumeCoverLetterTips shared something that stopped the thread cold. She had run her resume through a popular AI builder and was about to hit send when she noticed something odd in her skills section: "Python."

She has never written a line of code in her life.

The tool had added it confidently, without flagging it as a suggestion or asking for confirmation. It simply decided that Python was relevant to the job she was applying for and inserted it as if it were a fact.

"It is scary how confidently these tools invent details," she wrote. "Getting caught with false information on a resume would be a nightmare."

She caught it. Not everyone does.

Why This Happens: The Technical Explanation

The hallucination problem isn't random — it's structural. And understanding why it happens is the first step to protecting yourself.

As a data analyst who works with LLM (Large Language Model) outputs professionally, I recognize this as a predictable consequence of how these models work. LLMs don't retrieve facts — they generate probabilistically plausible text. When given a job description and a resume, a model trained to "complete" the tailoring task will produce whatever sequence of words is most statistically likely to appear in a strong resume for that role — regardless of whether those words describe the candidate's actual experience.

A tool builder who has worked on this exact problem explained the mechanics clearly in the same thread. Most AI resume tools that offer "full rewrites" are doing something that sounds helpful but is fundamentally risky: they're using the job description as a prompt to generate content from scratch.

The AI isn't editing your resume. It's writing a new one that sounds like your resume, using the job description as a blueprint. When there are gaps between what you have and what the job requires, the AI fills them in — confidently, fluently, and completely without basis in your actual experience.

"The approach that actually works," he explained, "is keeping the AI's job narrow. Instead of 'rewrite this resume,' the prompt should be 'here is the original resume and here is the job description — identify the gaps and suggest where existing experience could be reworded to match.' The AI is editing, not inventing."

The other critical guardrail: letting you approve each change before it applies. Tools that apply changes wholesale — all at once, without review — remove the only safety net between you and a resume full of fabrications.
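
The two guardrails described above, a narrow editing prompt and per-change approval, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular tool is built: the prompt wording, the function names `build_edit_prompt` and `apply_approved_changes`, and the sample resume lines are all hypothetical, and the `reject_python` function stands in for a human reviewer clicking accept or reject.

```python
import difflib
from typing import Callable, List


def build_edit_prompt(resume_text: str, job_description: str) -> str:
    """Return a narrowly scoped editing prompt (wording is illustrative only)."""
    return (
        "You are editing a resume, not writing a new one.\n"
        "Use only facts that appear in the ORIGINAL RESUME.\n"
        "Do not add skills, tools, titles, dates, or numbers.\n"
        "Identify the gaps against the JOB DESCRIPTION and suggest where "
        "existing experience could be reworded to match.\n\n"
        f"ORIGINAL RESUME:\n{resume_text}\n\n"
        f"JOB DESCRIPTION:\n{job_description}\n"
    )


def apply_approved_changes(
    original: List[str],
    proposed: List[str],
    approve: Callable[[List[str], List[str]], bool],
) -> List[str]:
    """Apply each proposed change only if the reviewer approves it."""
    result: List[str] = []
    matcher = difflib.SequenceMatcher(a=original, b=proposed, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        old, new = original[i1:i2], proposed[j1:j2]
        if tag == "equal":
            result.extend(old)   # unchanged lines pass straight through
        elif approve(old, new):
            result.extend(new)   # reviewer accepted this change
        else:
            result.extend(old)   # reviewer rejected it: keep the original
    return result


# Hypothetical sample data, invented for this sketch.
original = [
    "Skills: Excel, SQL",
    "Experience",
    "Prepared monthly variance reports",
]
proposed = [
    "Skills: Excel, SQL, Python",
    "Experience",
    "Prepared monthly budget-to-actual variance reports",
]


def reject_python(old: List[str], new: List[str]) -> bool:
    """Stand-in for a human reviewer who has never used Python."""
    return not any("Python" in line for line in new)


final = apply_approved_changes(original, proposed, reject_python)
print("\n".join(final))
```

Because every non-identical span goes through `approve`, a rejected deletion also keeps the original line, so the same review step guards against a tool silently removing content.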

Documented Cases: What Users Actually Found

The Python incident isn't an outlier. Here's what individual users, and independent testers in a systematic comparison published on Reddit, have found across multiple tools:

Wonsulting / ResumAI

In a test where the same mid-career resume was run through five leading AI tailoring tools against the same job description, Wonsulting's ResumAI inserted "$12 million in revenue" into the candidate's bullet points. There was no revenue figure of any kind in the original resume. The tool invented a specific, impressive-sounding metric from nothing.

As a CMA, I can tell you exactly why this is dangerous beyond the obvious. Financial metrics on a resume aren't just talking points — they're claims that background verification services, financial auditors, and experienced hiring managers are specifically trained to scrutinize. A $12 million revenue figure attached to a role where that scale is implausible won't just fail to impress; it signals to any experienced financial professional that something is wrong with the entire document.

AI Apply

Multiple users have reported a different but equally damaging problem: AI Apply's automated rewriting strips out metrics you actually do have. One user who tested the tool found that "automated rewrites sometimes dropped my best numbers." You go in with real, verifiable achievements and come out with a sanitized version that sounds professional but says nothing.
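
Both failure modes, invented numbers and dropped numbers, can be caught with a rough comparison of the figures in the two versions of a resume. The sketch below is an assumption-laden illustration: the regular expression only recognizes plain digits with optional `$`, thousands separators, decimals, and `%`, the function names are hypothetical, and the sample resume text is invented for the example (only the "$12 million" phrase echoes the test described above).

```python
import re
from typing import List, Set, Tuple

# Matches figures such as 6, 15,000, 3.5, 40% or $12 (a deliberately simple pattern).
NUMBER_PATTERN = re.compile(r"\$?\d+(?:,\d{3})*(?:\.\d+)?%?")


def extract_metrics(text: str) -> Set[str]:
    """Collect every numeric token found in the text."""
    return set(NUMBER_PATTERN.findall(text))


def compare_metrics(original: str, rewritten: str) -> Tuple[List[str], List[str]]:
    """Return (figures the rewrite added, figures the rewrite dropped)."""
    before = extract_metrics(original)
    after = extract_metrics(rewritten)
    return sorted(after - before), sorted(before - after)


# Hypothetical sample text, invented for this sketch.
original = "Cut month-end close from 10 days to 6. Managed 3 analysts."
rewritten = "Drove $12 million in revenue. Managed 3 analysts."

added, dropped = compare_metrics(original, rewritten)
print("Added by the rewrite:", added)
print("Dropped by the rewrite:", dropped)
```

Anything in the first list is a number to trace back to your real records before sending; anything in the second is a real achievement worth restoring.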

The Pattern Across Tools

A resume writer with years of professional experience put it bluntly when asked about AI tools in a separate Reddit thread: "They are slop." Her assessment wasn't about formatting or tone — it was about the fundamental problem of AI-generated content that sounds authoritative but lacks the one thing a resume needs: accuracy.

A recruiter in the same thread made the same point from the hiring side: "Rewritten vagueness is still vagueness. Without proper context, AI just extrapolates, and it's very easy to understand for an experienced recruiter."

The Risk You're Taking When You Send It Unchecked

Here's what the worst-case scenario actually looks like.

You submit a resume with a fabricated skill or an invented metric. It passes the initial screen — after all, the AI made sure the keywords were there. You get a call. The recruiter asks you to walk through your experience. You stumble because you're trying to remember something that never happened.

Even before the call, there's the LinkedIn check. Recruiters routinely cross-reference resumes against LinkedIn profiles. If your resume claims Python and your LinkedIn doesn't mention it anywhere, that's a flag. If your resume claims you grew revenue by $12 million and your job titles don't suggest that's plausible, that's another flag.

Background verification is increasingly sophisticated. Credentials get checked. Employment dates get verified. Numbers get scrutinized — especially financial ones. One fabricated detail doesn't just cost you that job. It raises questions about everything else on the resume.

What to Do Instead

The solution isn't to abandon AI tools entirely. It's to use them the way they were meant to be used: as editors, not authors.

Narrow the AI's job. Use AI to identify keyword gaps between your resume and the job description. Use it to suggest ways to rephrase existing experience more effectively. Don't use it to generate experience you don't have.

Verify everything before sending. Go through the final document and check every skill, every metric, every tool, every achievement. If you can't speak to it in an interview, it shouldn't be there.

The verification checklist:

- Every skill listed — have you actually used it?

- Every number or metric — can you explain exactly how it was achieved?

- Every tool or technology — could you answer questions about it?

- Every job title and date — does it match your employment records?

This takes ten minutes. It's worth it.
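
Part of the checklist above can be automated as a first pass. The following sketch flags skill or tool names that appear in the tailored resume but nowhere in the original. It is only a rough aid under stated assumptions: the function name `unsupported_terms` is hypothetical, the list of terms is one you supply yourself (for example, keywords copied from the job description), and the sample text is invented. It cannot tell you whether you have actually used a skill, so the manual read-through still matters.

```python
import re
from typing import List


def mentions(text: str, term: str) -> bool:
    """Case-insensitive whole-term match, so 'SQL' does not match 'MySQL'."""
    pattern = rf"(?<!\w){re.escape(term)}(?!\w)"
    return re.search(pattern, text, re.IGNORECASE) is not None


def unsupported_terms(original: str, tailored: str, terms: List[str]) -> List[str]:
    """Return terms present in the tailored resume but absent from the original."""
    return [
        term for term in terms
        if mentions(tailored, term) and not mentions(original, term)
    ]


# Hypothetical sample data, invented for this sketch.
original = "Skills: Excel, SQL. Built dashboards in Excel."
tailored = "Skills: Excel, SQL, Python, Tableau. Built dashboards in Excel."
terms_to_check = ["Excel", "SQL", "Python", "Tableau"]

flagged = unsupported_terms(original, tailored, terms_to_check)
print("Verify or remove:", flagged)
```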

One More Check Before You Send

Even after you've removed fabrications and verified your content, there's still the question of whether your resume will actually be read.

Formatting issues — tables, columns, graphics, non-standard fonts — can cause your document to fail ATS parsing entirely, regardless of how accurate the content is. A resume full of true, relevant, well-written information still needs to reach a human to do its job.

Our ATS Resume Checker does one specific thing: it checks whether your resume's formatting will survive automated screening. It doesn't rewrite your content, suggest new skills, or alter your bullet points — which means it doesn't carry the fabrication risk we've been discussing. It's a formatting and keyword gap check, nothing more.

Before submitting to any role, run your resume through the checker. It takes two minutes and tells you whether the structure is going to work against you. Then focus your energy on making sure every word in it is something you can stand behind.

The Bottom Line

AI resume tools can save you time. They can surface keywords you missed, suggest stronger phrasing for existing experience, and help you understand where your resume doesn't match a job description.

But the tools that do full rewrites — the ones that take over your resume wholesale and hand you back something that sounds polished — are producing documents that may include skills you don't have, metrics you never hit, and experience you can't explain.

The job seeker whose AI builder added Python to her skills section caught it before sending. The $12 million revenue figure made it into a tested submission. The question isn't whether these tools make things up. They do, and the pattern is documented across multiple platforms.

The question is whether you catch it before a recruiter does.

Ready to check your resume before it goes out? Use our ATS Resume Checker to verify your formatting in under two minutes — then make sure every word in it is something you can stand behind.

About the Author

Randy (Qinghao) Xia, CMA is a Senior Data Analyst with over 7 years of experience across financial analysis and data analytics. He holds a Master of Science in Data Analytics & Visualization from Yeshiva University, a Master of Accountancy from Missouri Southern State University, and the Certified Management Accountant (CMA) designation. He currently works at Progressive Leasing and writes about the intersection of AI tools and job searching at ai-coverletter-generator.com.

Sources: r/ResumeCoverLetterTips public discussion threads, March 2026. Direct links to source threads are provided inline throughout this article.